Special thanks to Marc Popp for his invaluable insights and help putting this blog together.

How we found a way to slash our AWS costs by creating full transparency

It’s become more convenient than ever to use cloud computing as the go-to option to build your own data analytics strategy – so convenient, in fact, that you might find you’ve racked up hefty expenses before you’ve actually done anything.

Here at Exasol, we mainly use AWS for setting up test systems. These systems are fairly complex and often have to be created and torn down at a high frequency. So it’s not surprising that people sometimes forget to clean up all their resources afterwards.

But unless you create full transparency, those left-over resources accumulate over time until they make up a considerable part of your monthly AWS bill.

Tagging isn’t optional

To achieve this transparency, we first established a mandatory AWS resource tagging guideline for our data analytics platform. This guideline helps our teams tag and label all AWS resources uniformly. Used together with AWS resource groups, it lets us unambiguously map resources to projects. For each project, we can compare the actual costs with our estimates. And each resource has a distinct owner who’s responsible for it.
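The guideline itself isn’t reproduced in this post, so here is only a minimal Python sketch of what a machine-checkable version of such a rule set could look like. The tag keys `Owner` and `Project` and both value patterns are assumptions made up for illustration, not our actual rules.

```python
import re

# Hypothetical guideline: tag key -> pattern the tag value must match.
TAG_RULES = {
    "Owner": re.compile(r"[\w.+-]+@[\w-]+(\.[\w-]+)+"),  # looks like an e-mail address
    "Project": re.compile(r"[a-z][a-z0-9-]{2,}"),        # short lowercase project id
}


def tag_violations(tags):
    """Return human-readable guideline violations for one resource's tags."""
    problems = []
    for key, pattern in TAG_RULES.items():
        value = tags.get(key)
        if value is None:
            problems.append(f"missing tag '{key}'")
        elif not pattern.fullmatch(value):
            problems.append(f"invalid value for '{key}': {value!r}")
    return problems
```

Keeping the rules in one small table like this means the written guideline and any automated check can be updated in the same place.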

The smart way to do your cost monitoring

Next, we set up AWS to create hourly cost reports in CSV format. We regularly import these into one of our databases. This allows us to analyze the large amount of collected cost data in real time. We then use Tableau to monitor the results of those analyses.

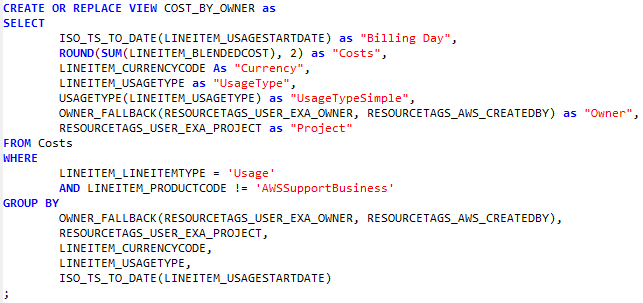

Here’s an example of one of the queries we run on the cost database:

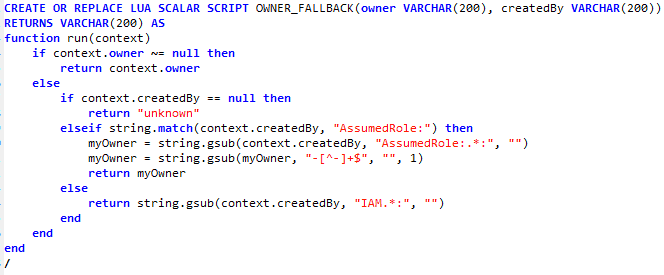
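The query above is only available as a screenshot. To give a rough idea of the kind of aggregation involved, here is a small Python sketch that sums costs per project from a CSV report and flags projects that exceed their estimate. The column names (`usage_start`, `project`, `cost`), the `UNTAGGED` bucket and the sample rows are all invented for this example; the real AWS report has many more columns, and our production logic runs as SQL in the database.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal


def cost_per_project(report_file):
    """Sum the cost column per project tag in a CSV cost report."""
    totals = defaultdict(Decimal)  # Decimal() starts every total at 0
    for row in csv.DictReader(report_file):
        project = row.get("project") or "UNTAGGED"  # empty tag -> catch-all bucket
        totals[project] += Decimal(row["cost"])
    return dict(totals)


def over_budget(totals, estimates):
    """Return projects whose actual cost exceeds their estimate (0 if none given)."""
    return {
        project: actual
        for project, actual in totals.items()
        if actual > estimates.get(project, Decimal("0"))
    }


# Made-up sample data with hypothetical column names.
SAMPLE = """usage_start,project,cost
2024-01-01T00:00:00Z,perf-tests,1.25
2024-01-01T01:00:00Z,perf-tests,1.25
2024-01-01T00:00:00Z,,0.40
"""

totals = cost_per_project(io.StringIO(SAMPLE))
flagged = over_budget(totals, {"perf-tests": Decimal("3")})
```

Using `Decimal` rather than floats avoids rounding drift when many small hourly amounts are added up.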

To make the SQL code more readable we extracted some functions into user defined scalar functions. For example, we set up a multistage fallback to determine ownership so we don’t have to worry if we miss something and it’s not tagged properly.

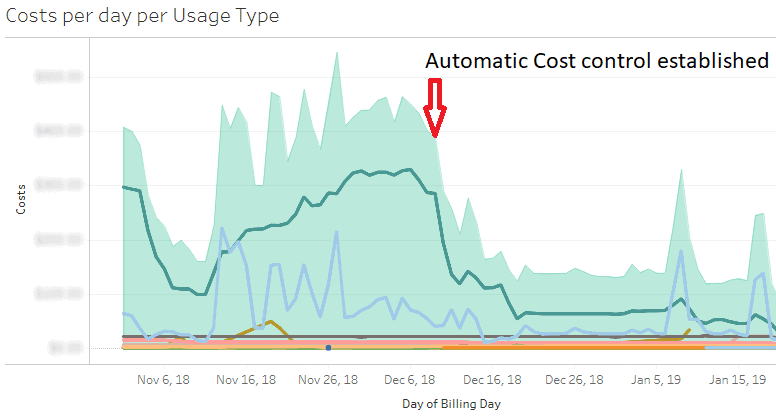
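The function in the screenshot is a SQL scalar function. The same multistage fallback idea can be sketched in Python as follows. The individual stages (an `Owner` tag, a lowercase `owner` tag, then the creator of the resource) and the `unassigned` default are assumptions chosen for illustration and don’t necessarily match the stages we use.

```python
def resolve_owner(tags, created_by=None, default="unassigned"):
    """Return the first usable owner from a chain of fallbacks."""
    candidates = (
        tags.get("Owner"),  # 1. explicit owner tag (hypothetical key)
        tags.get("owner"),  # 2. same tag written in lowercase
        created_by,         # 3. whoever created the resource, if known
    )
    for candidate in candidates:
        if candidate and candidate.strip():
            # Normalise so the same person isn't counted under two spellings.
            return candidate.strip().lower()
    return default
```

For example, `resolve_owner({"owner": ""}, created_by="bob")` skips the empty tag and falls through to the creator, so the cost still lands on somebody’s report.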

As you can see in the cost diagram below, even if we hadn’t added a marker in the screenshot, you could probably guess when our new cost control measures kicked in.

And now, all our resource owners get individual cost reports every week, detailing how much they’re spending on their AWS resources.

This message will automatically destroy your resources in 7, 6, 5 …

Of course, it’s not enough to monitor your costs; you need to act on the results. So the next measure we implemented was scanning for untagged or incorrectly tagged resources using the Skew Python library. This library gives us read access to all the AWS resource metadata, like names and tags. That way you can easily query all services in your AWS account and get back a list of resources.

A Python script on top of Skew regularly identifies all resources breaking the tagging guidelines we set up. Owners have seven days to fix the reported tagging issues before the offending resources are deleted.
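Our script isn’t published here, but the scan-and-grace-period logic can be sketched roughly like this. It assumes Skew’s `scan()` function with an ARN pattern and the `arn` and `tags` attributes of the resources it returns; the required tag keys, the EC2-only pattern and the `review` helper are hypothetical, and the actual notification and deletion steps are deliberately left out.

```python
from datetime import date, timedelta

REQUIRED_TAGS = ("Owner", "Project")  # hypothetical guideline keys
GRACE_PERIOD = timedelta(days=7)      # owners get seven days to fix their tags


def scan_tags(arn_pattern="arn:aws:ec2:*:*:instance/*"):
    """Yield (arn, tags) pairs via Skew; needs Skew configured and AWS credentials."""
    from skew import scan  # imported lazily so the rest runs without Skew

    for resource in scan(arn_pattern):
        yield resource.arn, resource.tags or {}


def review(resources, first_reported, today):
    """Split non-compliant resources into 'notify' and 'delete' lists.

    first_reported maps ARN -> date the violation was first reported;
    newly found violations are recorded with today's date.
    """
    notify, delete = [], []
    for arn, tags in resources:
        if all(tags.get(key) for key in REQUIRED_TAGS):
            first_reported.pop(arn, None)  # fixed in the meantime: forget the report
            continue
        reported = first_reported.setdefault(arn, today)
        if today - reported >= GRACE_PERIOD:
            delete.append(arn)
        else:
            notify.append(arn)
    return notify, delete
```

In a scheduled job, something like `review(scan_tags(), state, date.today())` would run regularly, with `state` persisted between runs so the seven-day clock survives restarts.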

Planning + the right data analytics platform = the end to AWS bill shock

So it’s pretty clear that by using cloud computing without proper planning or the right data analytics platform, you can quickly incur costs that are way higher than you originally anticipated. You need to establish a continuous cost monitoring regime in order to keep things under control. And as you’ve seen, by combining our own data analytics platform, AWS and Tableau – together with the right processes – we’ve found it easy to do that with relatively little effort.

If you want to learn more about how we can help you slash your AWS costs, please don’t hesitate to contact us. And, likewise, we’d love to hear from you if you have your own tips or insights on this topic.